Next webinar Registration

Despite recent tendencies towards building large "monolithic" neural models, fine-tuned expert models and parameter-efficient specialised modules still offer gains over large monoliths in specific tasks and for specific data distributions (e.g., low-resource languages or specialised domains). Moreover, such modularisation of skills and expertise into dedicated models or modules allows for asynchronous, decentralised, and more efficient continuous model development, as well as module reusability. However, a central question remains: how to combine and compose these modules to enable positive transfer, sample-efficient learning, and improved out-of-domain generalisation. In this talk, after discussing the key advantages of modularisation and modular specialisation, I will provide an overview of prominent module and model composition strategies. I will focus on composition at the parameter level (model merging) and functional level (model MoErging), and then illustrate the usefulness of these techniques across several applications.

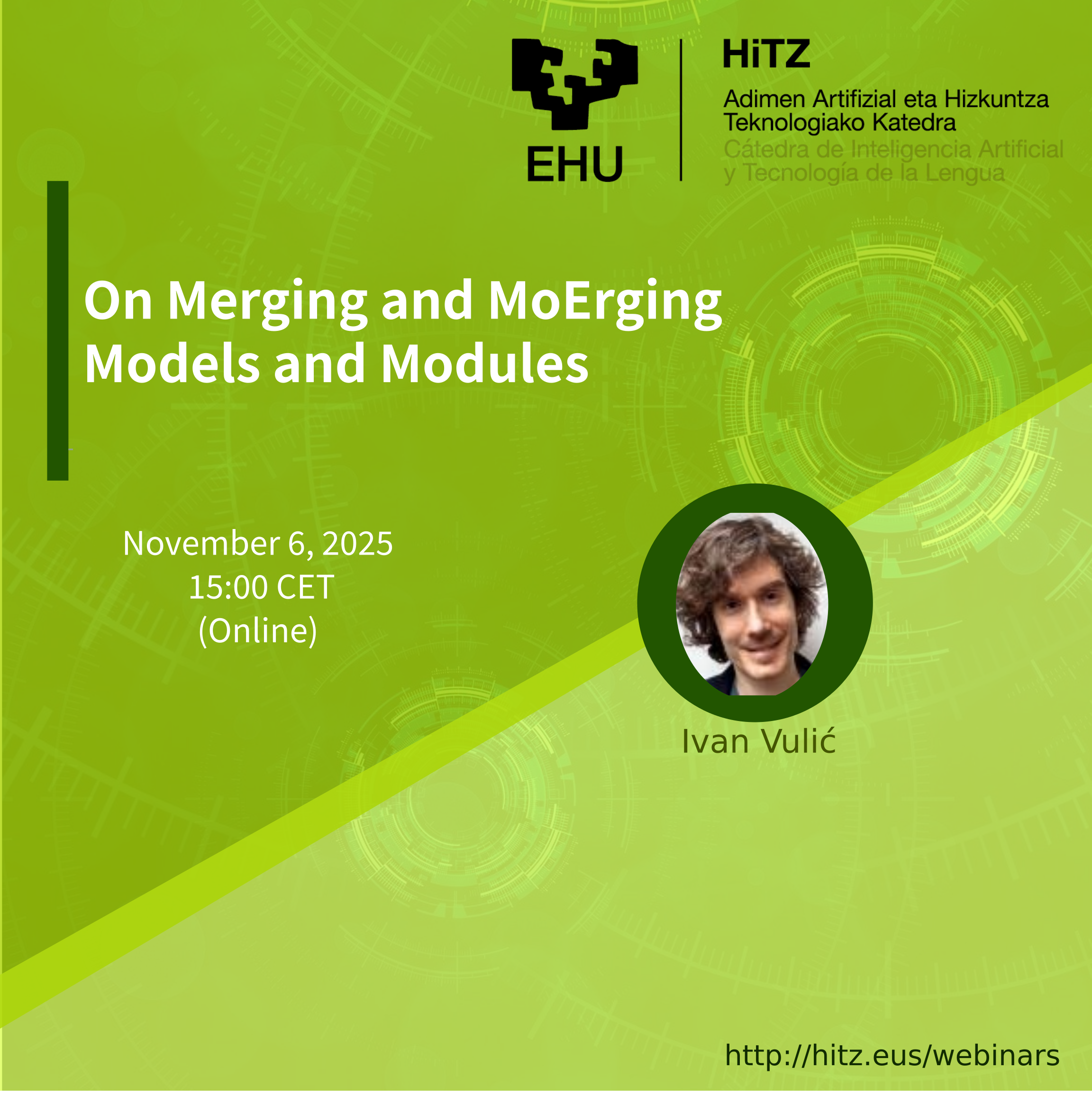

Ivan Vulić is currently a Research Scientist at Google DeepMind in Zurich, after spending a year there as a Visiting Researcher. Before that, he was a Research Professor and a Royal Society University Research Fellow in the Language Technology Lab, University of Cambridge, where he spent 10 years across different research roles. From January 2018 until November 2024, he was also a Senior Scientist at PolyAI in London. Ivan holds a PhD in Computer Science from KU Leuven, awarded summa cum laude. In 2021, he received the annual Karen Spärck Jones Award from the British Computer Society for his research contributions to Natural Language Processing and Information Retrieval. His core expertise and research interests include, among others, cross-lingual, multilingual, and multi-modal representation learning; modularity and composability of ML models; sample-efficient, parameter-efficient, and few-shot ML; conversational AI; and data-centric ML.

If you are interested in attending this webinar, please fill out the form below.